Modernizing our Progressive Enhancement Delivery

For more than a decade here at Filament Group, we’ve been scrutinizing and updating our workflow for delivering broadly accessible, fault-tolerant websites. Much of that time was spent making small, subtle refinements, but there were also several moments when we made larger, more philosophical changes to the way we deliver sites.

In my mind, the first of those came when we began using client-side feature tests to make better decisions about whether—or not!—to apply enhancements on top of an already-usable HTML page. A second shift came from embracing responsive design, as that enabled us to serve a catered, appropriate visual layout to a rapidly-widening spectrum of screen sizes without resorting to any forks in our delivery. More recently, a third leap came from focusing on streamlining the “critical path” to a usable page by removing everything that blocks it from rendering immediately upon arrival.

This week, we’ve put the final touches on some new techniques that further sped up our site’s load time and dramatically reduced our reliance on network requests. We think they’ll be a game changer for our own site, and they will inform our recommendations to our clients moving forward.

A New Milestone

This site may not look any different than it did last week (it’s largely identical, really), but we’ve made big changes to the way it’s delivered, thanks to two web standards that have recently stabilized in browsers and on servers. Both aim to reduce our reliance on network requests and the time we spend waiting on them.

HTTP/2 for speed

First, we’ve updated our server environment to use the newest iteration of the web’s beloved HTTP protocol: version 2! “H2,” for short, brings some incredible features, such as “multiplexing,” which allows many file requests to share the same TCP connection simultaneously, and “Server Push,” which allows the server to respond to a request with not just the requested file, but any other dependencies it deems relevant as well. Both mean less time waiting on high-latency trips between the client and the server. Browser support for H2 is already very good, and the protocol is negotiated per connection, so browsers that don’t support H2 can still use our site as they always have, over good ol’ HTTP/1.1.

Service Worker for offline & unstable connections

Second, our site is now offline-friendly! Most modern browsers will now look for locally stored versions of our files before reaching out to our web server (an approach often referred to as “offline-first”). As a result, much of our site will remain accessible to users who are offline or browsing over unstable networks.

The feature that makes this possible is called Service Worker. It’s a new API that enables parts of our JavaScript to act as a proxy between the browser and the server, intercepting and managing requests and responses, and storing or retrieving files from cache. Service Worker is a better (and admittedly much more complicated) successor to AppCache, the notoriously flawed feature that first enabled offline behavior.

That’s the high-level view of what’s new. Below, we’ll cover some of the technical nitty-gritty of how we integrated these new technologies. If that part is not your bag, thanks so much for reading! Please do give us a holler if you need help designing a speedy and resilient site of your own.

Still here? Alright, let’s dig into how we’ve integrated these features into (or largely, on top of) our progressive enhancement workflow, and the measurable impact they’ve made.

A Technical Overview of What’s New

Delivery starts on the server, and server setup is pretty simple. I’ll cover that first.

Server Setup with HTTP/2

The files and content of our site are managed by the lovely Jekyll static site generator. When we deploy our site, the files from Jekyll are copied to a Linux server and served securely over HTTPS by Apache (note: browsers require TLS for both H2 and Service Worker). Apache has had some nice updates lately, and it now includes great support for HTTP/2.

Contrary to how difficult John wanted us to believe it was for him, enabling “H2” was merely a matter of turning on Apache’s HTTP/2 module (mod_http2) and adding a single directive to our virtual host configuration (sorry, John):

Protocols h2 http/1.1

…that’s it! With that change, our site immediately started upgrading most of our users to the new protocol, and our pages began finishing their loads sooner than they used to, since all requests could now be made in parallel rather than in limited batches. Admittedly, our site does not reference many assets, so the results from this step are not as dramatic as they’d be on a more media-heavy site.
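To make “limited batches” a little more concrete, here’s a toy TypeScript model comparing the two protocols. It assumes, purely for illustration, that every request costs exactly one round trip, that bandwidth never becomes the bottleneck, and that an HTTP/1.1 browser opens at most six connections per host (a common default, not a guarantee). Real waterfalls are messier, so treat the numbers as a sketch rather than a measurement of our site.

```typescript
// Toy model of "limited batches": how many round trips does it take to fetch
// `assetCount` files from a single host?
// Simplifying assumptions: every request costs exactly one round trip,
// bandwidth is never the bottleneck, and all assets are known up front.

// Assumed HTTP/1.1 cap on parallel connections per host (a common browser default).
const HTTP1_CONNECTIONS_PER_HOST = 6;

function roundTripsHttp1(assetCount: number, connections: number = HTTP1_CONNECTIONS_PER_HOST): number {
  // Each connection carries one request at a time, so files go out in batches.
  return Math.ceil(assetCount / connections);
}

function roundTripsHttp2(assetCount: number): number {
  // H2 multiplexes every request over one connection, so they all go out together.
  return assetCount > 0 ? 1 : 0;
}

// Example: the ten shared assets in the push list later in this post,
// over a hypothetical 100 ms round-trip time.
const rttMs = 100;
const sharedAssetCount = 10;
console.log(`HTTP/1.1: ${roundTripsHttp1(sharedAssetCount) * rttMs} ms`); // "HTTP/1.1: 200 ms"
console.log(`HTTP/2: ${roundTripsHttp2(sharedAssetCount) * rttMs} ms`); // "HTTP/2: 100 ms"
```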

H2 can be enabled in a variety of server environments now, including NGINX, Node, and probably whatever you are using. There’s a bit more we’re doing with H2 on the new site, but I’ll come back to that after some background on how we’re delivering our HTML files.

HTML Delivery

Our highest priority in the delivery process is to serve HTML that’s ready to begin rendering immediately upon arrival in the browser. Notably, that’s not the way sites typically work. Usually, the browser requests an HTML document, downloads it, begins parsing it, and finds references to CSS and JavaScript files that it must retrieve from the server before it can render the page. The steps involved form what is often called the Critical Rendering Path, and that path lengthens with every additional asset the browser must fetch before rendering. More concretely, each round trip to the server delays the moment a user can see HTML content they have already downloaded.

That’s not good, but it’s not an easy problem to fix either. In order to begin addressing this problem, we try to enforce a couple of rules in our delivery:

- Any assets that are critical to rendering the first screenful of our page should be included in the HTML response from the server.

- Any assets that are not critical to that first rendering should be delivered in a non-blocking, asynchronous manner.

Now, in the past (in HTTP/1.x, that is), our only option for addressing the first rule above was to inline all of the assets in question: any critical CSS would be stuffed into a <style> element in the <head> of the HTML document, and any critical JavaScript (e.g. scripts used for running feature tests and bootstrapping page enhancements) would be stuffed into a <script> element in the <head> as well. Of course, a large portion of the CSS rules in a typical site’s stylesheet are not critical to rendering any one page, so inlining all of those rules at the top of an HTML page would be wasteful. Beyond the waste, inlining that much code would also work against our goals: the first round trip of an HTML response can only carry about 14KB of compressed text, and CSS files are often larger than that on their own.

When inlining, we want to fit as much as we can into that first 14KB, so to reduce the weight of the “critical CSS,” we use tools that extract just the portion of the CSS relevant to rendering the top portion of each template on our site. An automated critical CSS tool runs through each unique template on our site and saves a “critical” CSS file for it, which our build then includes in each HTML file.
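The 14KB figure comes from TCP slow start. A commonly used initial congestion window is 10 segments (recommended in the experimental RFC 6928), and a typical maximum segment size (MSS) on an Ethernet path is 1,460 bytes. Assuming those common defaults, and ignoring TLS and HTTP framing overhead, the server can send roughly this much before it has to wait for the browser’s acknowledgment:

```latex
\underbrace{10}_{\text{initial congestion window (segments)}} \times \underbrace{1460\ \text{bytes}}_{\text{typical MSS}} = 14{,}600\ \text{bytes} \approx 14\ \text{KB}
```

Anything past that budget arrives at least one round trip later, which is why inlining a large stylesheet can undo the benefit of inlining.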

In our workflow, we deliver HTML with the critical CSS inlined to all first-time visitors, while asynchronously loading (read: loading without blocking page rendering) all of the other assets that the page references, including the site’s full CSS and JavaScript, which are then cached for later. Subsequent visits to any page on the site receive HTML that references the full assets normally, under the assumption that they’re now cached and no longer need to be inlined.
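For illustration, here’s a small TypeScript sketch of the first-visit markup described above: critical CSS inlined in a <style> element, plus the full stylesheet loaded without blocking rendering. It uses the rel="preload" pattern popularized by loadCSS, with a <noscript> fallback. The function names and arguments are hypothetical, and the CSS text is assumed to be trusted build output, since it isn’t escaped.

```typescript
// Build <head> markup for a first-time visitor: inline the critical CSS so the
// page can render from the first response, then fetch the full stylesheet
// without blocking rendering.
// Assumes `criticalCss` is trusted build output; it is not escaped here.
function firstVisitCssMarkup(criticalCss: string, fullCssHref: string): string {
  return [
    `<style>${criticalCss}</style>`,
    // rel="preload" downloads the file without applying it; once it has
    // loaded, switching rel to "stylesheet" applies it.
    `<link rel="preload" href="${fullCssHref}" as="style" onload="this.onload=null;this.rel='stylesheet'">`,
    // Without JavaScript the onload handler never runs, so link the file normally.
    `<noscript><link rel="stylesheet" href="${fullCssHref}"></noscript>`,
  ].join("\n");
}

// Returning visitors (full CSS presumably cached) just get a normal link.
function returningVisitCssMarkup(fullCssHref: string): string {
  return `<link rel="stylesheet" href="${fullCssHref}">`;
}

console.log(firstVisitCssMarkup("body{margin:0}", "/css/dist/all.css?01042017"));
```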

Enter, HTTP/2 Server Push

Inlining is a measurably worthwhile workaround, but it’s still a workaround. Fortunately, HTTP/2’s Server Push feature brings the performance benefits of inlining without sacrificing cacheability for each file. With Server Push, we can respond to a request for a particular file by immediately sending additional files we know that file depends upon. In other words, the server can respond to a request for index.html with index.html, css/site.css, and js/site.js!

In Apache, H2 Push is on by default, and you can specify resources that should be pushed either by adding a Link header to the response or by using the slightly better H2PushResource directive. Here’s how that index.html example would look inside an Apache VirtualHost or .htaccess file:

<If "%{DOCUMENT_URI} == '/index.html'">
  H2PushResource add css/site.css
  H2PushResource add js/site.js
</If>

In English, that says: “If the path to the requested document is /index.html, push these additional CSS and JavaScript files.” On our website, we use Server Push like this to immediately send all of the assets that a page needs to render, including the small critical files we would have inlined in the HTML had we been using HTTP/1.1. Let’s look at that in code.

Our H2 Push Configuration

All of the pages on our site reference a short list of shared assets (scripts, stylesheets, fonts, images), so we push those on the first visit to any HTML file:

<If "%{DOCUMENT_URI} =~ /\.html$/">
  H2PushResource add /js/dist/head.js?01042017 critical
  H2PushResource add /js/dist/foot.js?01042017 critical
  H2PushResource add /css/dist/bg/icons.data.svg.css
  H2PushResource add /css/type/lato-semibold-webfont.woff2
  H2PushResource add /css/type/lato-light-webfont.woff2
  H2PushResource add /css/type/lato-lightit-webfont.woff2
  H2PushResource add /css/dist/all.css?01042017
  H2PushResource add /js/dist/all.js?01042017
  H2PushResource add /images/dwpe-bookcover-lrg.png
  H2PushResource add /images/rrd-book.png
</If>
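Apache will also push files listed in a Link response header with rel=preload, which can be handier when an application, rather than server config, decides what to push. Here’s a hedged TypeScript sketch that builds such a header from a list of paths; the extension-to-destination mapping and the function names are our own assumptions, not part of Apache.

```typescript
// Build a Link response header that lists files with rel=preload. H2 servers
// such as Apache's mod_http2 (with H2Push on) treat these as push candidates.
// Mapping file extensions to `as` values is our own simplification.
type Destination = "script" | "style" | "font" | "image";

function destinationFor(path: string): Destination | null {
  const clean = path.replace(/[?#].*$/, ""); // ignore cache-busting query strings
  if (/\.js$/.test(clean)) return "script";
  if (/\.css$/.test(clean)) return "style";
  if (/\.woff2?$/.test(clean)) return "font";
  if (/\.(png|jpe?g|gif|svg|webp)$/.test(clean)) return "image";
  return null;
}

function linkPreloadHeader(paths: string[]): string {
  return paths
    .map((path) => {
      const dest = destinationFor(path);
      if (dest === null) return `<${path}>; rel=preload`;
      // Font preloads need crossorigin, since fonts are fetched in CORS mode.
      const cors = dest === "font" ? "; crossorigin" : "";
      return `<${path}>; rel=preload; as=${dest}${cors}`;
    })
    .join(", ");
}

console.log(linkPreloadHeader(["/css/dist/all.css?01042017", "/css/type/lato-light-webfont.woff2"]));
// </css/dist/all.css?01042017>; rel=preload; as=style, </css/type/lato-light-webfont.woff2>; rel=preload; as=font; crossorigin
```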

Each unique template on our site also has a unique critical CSS file that should be pushed with it, so we have conditional push directives for the pages that use each of our templates as well:

<If "%{DOCUMENT_URI} == '/portfolio/index.html'">
  H2PushResource add /css/dist/critical-portfolio.css?01042017
</If>

<If "%{DOCUMENT_URI} == '/code/index.html'">
  H2PushResource add /css/dist/critical-code.css?01042017
</If>
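As the number of templates grows, it may help to keep these rules in one place. The following TypeScript sketch expresses the same push rules as data (the paths are copied from the configuration above); the function and constant names are hypothetical, and such a table could, for instance, drive a Node server or a script that generates the Apache configuration.

```typescript
// The push rules above, expressed as data: every .html document gets the
// shared assets, and pages using certain templates add their critical CSS.
const SHARED_PUSH: string[] = [
  "/js/dist/head.js?01042017",
  "/js/dist/foot.js?01042017",
  "/css/dist/bg/icons.data.svg.css",
  "/css/type/lato-semibold-webfont.woff2",
  "/css/type/lato-light-webfont.woff2",
  "/css/type/lato-lightit-webfont.woff2",
  "/css/dist/all.css?01042017",
  "/js/dist/all.js?01042017",
  "/images/dwpe-bookcover-lrg.png",
  "/images/rrd-book.png",
];

const TEMPLATE_CRITICAL_CSS: { [documentUri: string]: string } = {
  "/portfolio/index.html": "/css/dist/critical-portfolio.css?01042017",
  "/code/index.html": "/css/dist/critical-code.css?01042017",
};

function pushListFor(documentUri: string): string[] {
  // Mirrors the "any .html document" rule: nothing else triggers pushes.
  if (!/\.html$/.test(documentUri)) return [];
  const list = SHARED_PUSH.slice();
  // Mirrors the per-template rules: add that template's critical CSS, if any.
  const critical = Object.prototype.hasOwnProperty.call(TEMPLATE_CRITICAL_CSS, documentUri)
    ? TEMPLATE_CRITICAL_CSS[documentUri]
    : undefined;
  if (critical !== undefined) list.push(critical);
  return list;
}

console.log(pushListFor("/portfolio/index.html").length); // 11
```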

And lastly, we use a cookie to make sure we only push assets the first time a given user visits our site. Ideally with H2, the browser should be able to cancel a push for an asset it already has in cache, but in practice this doesn’t always work, and we saw the server occasionally re-push cached assets before the browser could say “no thanks.”

In the future, Cache Digest lists may be sent with request headers so that the server can be informed of the files a browser has in cache. For now though, we can mimic this by setting a cookie on first visit; if that cookie is already set, we don’t push.
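The cookie check itself is simple. Here’s a minimal TypeScript sketch of the decision, assuming a hypothetical marker cookie named h2pushed with a one-week lifetime (both are illustrative choices). Note that a cookie is only a proxy for the cache: a visitor can clear their cache and keep the cookie, in which case nothing is pushed and the files are simply requested normally.

```typescript
// Push only on a visitor's first request. The marker cookie's name, value,
// and lifetime are hypothetical choices.
const PUSHED_COOKIE = "h2pushed";
const PUSHED_COOKIE_MAX_AGE = 60 * 60 * 24 * 7; // one week, in seconds

function hasCookie(cookieHeader: string | undefined, name: string): boolean {
  if (!cookieHeader) return false;
  // A Cookie header looks like "a=1; b=2"; compare each pair's name exactly.
  return cookieHeader.split(";").some((pair) => pair.trim().split("=")[0] === name);
}

interface PushDecision {
  push: string[]; // files to push with this response
  setCookie: string | null; // Set-Cookie value to send, if any
}

function decidePush(pushList: string[], cookieHeader: string | undefined): PushDecision {
  if (hasCookie(cookieHeader, PUSHED_COOKIE)) {
    // Probably cached already (a guess: caches can be cleared), so push nothing.
    return { push: [], setCookie: null };
  }
  // First visit: push everything and mark the browser for next time.
  return {
    push: pushList,
    setCookie: `${PUSHED_COOKIE}=1; Path=/; Max-Age=${PUSHED_COOKIE_MAX_AGE}`,
  };
}
```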

Readying our HTML Templates for Push (or not!)

Within our HTML templates on the server side, we’re able to use environment variables to detect the version of HTTP that is in play on a given request. With that knowledge, we can configure our HTML to either inline our critical files (if it’s HTTP/1.1), or reference them externally knowing they’ll be pushed along with the HTML (if it’s HTTP/2).

You can check the protocol in almost any server-side language, such as PHP, or whatever you happen to use. In the trusty old SSI syntax we happen to use on this site (remember SSI!?), our H2 detection check looks like this:

<!--#if expr="$SERVER_PROTOCOL=/HTTP\/2\.0/" -->
  <link rel="stylesheet" href="/css/dist/critical-portfolio.css?01042017">
<!--#else -->
  <style>
    <!--#include virtual="/css/dist/critical-portfolio.css?01042017" -->
  </style>
<!--#endif -->
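The same decision, sketched in TypeScript for servers that build HTML in application code. It assumes the protocol string looks like Apache’s SERVER_PROTOCOL value (for example “HTTP/2.0” or “HTTP/1.1”); other servers report the protocol differently, so the check would need adapting. The function name is hypothetical, and the inlined CSS is assumed to be trusted build output.

```typescript
// The SSI check above as a function: reference the critical CSS when the
// request arrived over HTTP/2 (the file is being pushed), inline it otherwise.
// `serverProtocol` is assumed to look like Apache's SERVER_PROTOCOL value.
function criticalCssMarkup(serverProtocol: string, href: string, cssText: string): string {
  const isH2 = /^HTTP\/2(\.0)?$/.test(serverProtocol);
  if (isH2) {
    // Pushed alongside the HTML, so an external reference costs no extra round trip.
    return `<link rel="stylesheet" href="${href}">`;
  }
  // HTTP/1.1: inline the file so the first response can render on its own.
  // cssText is assumed to be trusted build output; it is not escaped.
  return `<style>${cssText}</style>`;
}

console.log(criticalCssMarkup("HTTP/1.1", "/css/dist/critical-portfolio.css?01042017", "body{margin:0}"));
// <style>body{margin:0}</style>
```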

We apply this same logic for the few critical files that we’d normally include inline, like the small bootstrapping JavaScript we use in the <head> of our page, and the JavaScript we use to progressively apply webfonts once they finish loading.

Seeing H2 in action

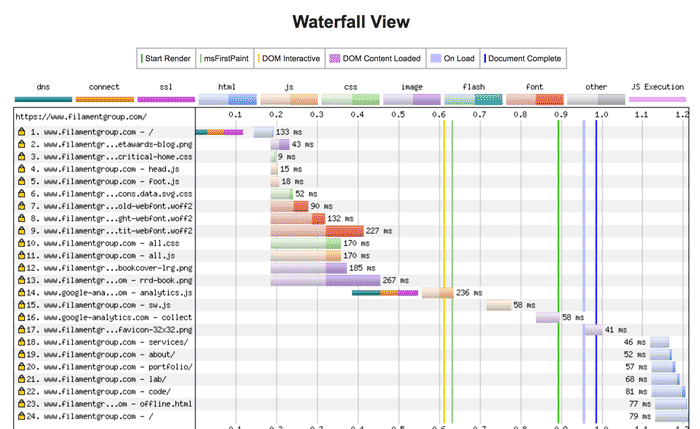

Permalink to 'Seeing H2 in action'By running our page through WebPageTest.org, we can see our H2 optimizations working. Check out this pile of parallel requests in the first 600 milliseconds of our page loading (which happens to be the time it takes to get a usable page on our site, on a typical wifi connection).

Notably, we’re not currently using a CDN on this site (though we do recommend using one), so our request times vary depending on the user’s physical location in the world. We also spend more time on DNS lookups and the TLS handshake than we would like, so those could use some tuning as well. But for now, this feels plenty fast and resilient.

There are more steps to our page enhancement process, but that covers the parts that changed with our move to H2. You can read about our holistic page delivery workflow (before these new H2 improvements) in our post Delivering Responsibly.

Our Service Worker Implementation

In addition to the H2 update, we’ve integrated a Service Worker as well. The Service Worker API is the new suite of features that enable our sites to work offline, make smarter caching decisions, and be more tolerant of inconsistent network connectivity. If Service Worker is new to you (it was for us!), there are many great primers out there. Personally, I found Lyza Danger Gardner’s post Making a Service Worker helpful, in addition to some good old view-source reverse engineering of our good friend Adactio’s site (Thanks Jeremy!).

Caching & Offline Strategy

Our use of Service Worker is pretty standard. For starters, on the first visit we use the service worker to cache the shared assets listed in our H2 server push list above, so that they’ll be available locally the next time they’re requested. However, we did run into a small hangup in this step. The service worker begins fetching files once the page has finished loading, so by the time the worker requests its assets, they’ve already been downloaded once and placed in the browser’s HTTP cache. We wanted the worker to pull these files from that cache instead of requesting them fresh from the web, but this is a touchy process: even minor differences between the first and subsequent requests can cause the HTTP cache to ignore an already-cached file and fetch a fresh copy.

In our case, we had mistakenly set our Vary header to "Cookie" on all of our responses, which tells the browser that their content should be expected to vary based on the request’s cookies. In practice, we only wanted Vary on our HTML files, since those are the only assets that actually vary based on cookies. But because the header was set on every file, the requests the service worker made carried cookies that were not present the first time those same requests were made, so the browser wouldn’t serve them from its cache. Setting Vary only on our HTML files resolved this, but I wanted to note it since it hung us up.
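In other words, the fix boils down to a per-response rule. Here’s a small TypeScript sketch of it; the function name is hypothetical, and treating URLs that end in a slash as HTML is an assumption about how the site’s URLs are shaped.

```typescript
// Decide the Vary header per response. On this site only HTML changes with
// cookies, so only HTML should say "Vary: Cookie". On static assets it makes a
// cached copy match only requests carrying the same cookies, so a request made
// after a new cookie is set misses the cache.
// Treating trailing-slash URLs as HTML is an assumption about the URL scheme.
function varyHeaderFor(path: string): string | null {
  const clean = path.replace(/[?#].*$/, ""); // ignore query strings and fragments
  const isHtml = /\.html$/.test(clean) || /\/$/.test(clean);
  return isHtml ? "Cookie" : null;
}

console.log(varyHeaderFor("/css/dist/all.css?01042017")); // null
```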

In addition to the static assets, the worker also fetches the HTML for the top-level pages of our site, so that the primary navigation works if you lose connectivity after loading the first page, along with a fallback offline page to serve if you’re offline and request a page that is not in cache. When we fetch these pages, we make sure to include request credentials, so that cookies are sent along and the server returns versions of our HTML pages that do not include the inlined critical CSS.
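Here’s a rough TypeScript sketch of that install-time plan. The credentials values (“omit”, “same-origin”, “include”) are the real options accepted by fetch(); the function name and the way entries are split are our own illustration, not a copy of the site’s worker. Explicit credentials mattered because fetch() originally defaulted to omitting cookies (browsers have since switched the default to “same-origin”).

```typescript
// Describe what the worker fetches at install time. Credentials values match
// the options fetch() accepts; the split below is an illustrative reading of
// the approach described above.
type Credentials = "omit" | "same-origin" | "include";

interface PrecacheEntry {
  url: string;
  credentials: Credentials;
}

function precachePlan(sharedAssets: string[], topLevelPages: string[], offlinePage: string): PrecacheEntry[] {
  // Static assets: default same-origin mode, ideally satisfied from the HTTP cache.
  const assets = sharedAssets.map((url): PrecacheEntry => ({ url, credentials: "same-origin" }));
  // HTML pages plus the offline fallback: send cookies so the server returns
  // the version without inlined critical CSS.
  const pages = topLevelPages
    .concat([offlinePage])
    .map((url): PrecacheEntry => ({ url, credentials: "include" }));
  return assets.concat(pages);
}
```

In a worker’s install handler, each entry would become fetch(entry.url, { credentials: entry.credentials }), followed by cache.put() on the response.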

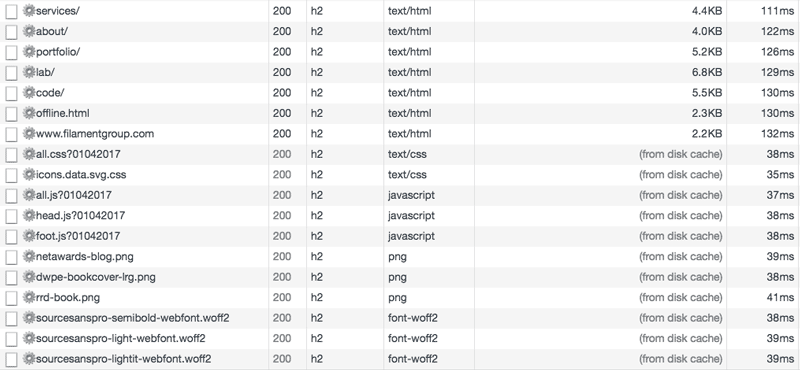

Here’s a view from Chrome’s Network tab showing the initial requests made by our service worker. Note that the top-level pages are requested from the server, while the assets are fetched from browser cache:

Beyond the shared assets and top-level pages, the service worker caches all of the pages and files you request as you browse around our site. If you drop offline and a page is already in cache, it will load just fine. If it’s not in cache, you get the fallback page, which suggests you’ve lost connectivity. Lastly, we borrowed a neat little trick from Adactio in which the service worker returns an SVG image that says “offline” for any image request that fails while the user is offline.
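The logic behind that behavior can be sketched as a cache-first strategy with two fallbacks. To keep it runnable outside a browser, this TypeScript model is synchronous and swaps the Cache API and fetch() for simple stand-ins; the offline page path and the SVG markup are hypothetical placeholders.

```typescript
// Synchronous model of a cache-first worker with offline fallbacks, so the
// decision logic can run outside a browser. SimpleCache and Network stand in
// for the Cache API and fetch(); Network returns null when offline.
interface SimpleCache {
  get(url: string): string | undefined;
  set(url: string, body: string): void;
}

type Network = (url: string) => string | null;

interface Req {
  url: string;
  kind: "page" | "image" | "asset";
}

const OFFLINE_PAGE_URL = "/offline/"; // hypothetical path, precached at install
const OFFLINE_SVG =
  '<svg xmlns="http://www.w3.org/2000/svg" width="300" height="150"><text x="20" y="80">offline</text></svg>';

function makeCache(): SimpleCache {
  const store: { [url: string]: string } = {};
  return {
    get: (url) => (Object.prototype.hasOwnProperty.call(store, url) ? store[url] : undefined),
    set: (url, body) => {
      store[url] = body;
    },
  };
}

// Returns the response body to serve, or null when there is nothing to offer.
function respond(req: Req, cache: SimpleCache, network: Network): string | null {
  // 1. Cache first: anything seen before is served locally.
  const cached = cache.get(req.url);
  if (cached !== undefined) return cached;

  // 2. Otherwise go to the network, keeping a copy for next time.
  const fresh = network(req.url);
  if (fresh !== null) {
    cache.set(req.url, fresh);
    return fresh;
  }

  // 3. Offline and uncached: fall back based on what was requested.
  if (req.kind === "image") return OFFLINE_SVG;
  if (req.kind === "page") {
    const offlinePage = cache.get(OFFLINE_PAGE_URL);
    return offlinePage !== undefined ? offlinePage : null;
  }
  return null;
}
```

In a real worker, the same three steps would run in a fetch event handler via event.respondWith(), using caches.match(), fetch(), and cache.put(), all of which return promises. Cache-first also means an updated page isn’t seen until its cached copy is replaced, which is why many sites prefer a network-first strategy for HTML.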

Progressive Web Appy Goodness

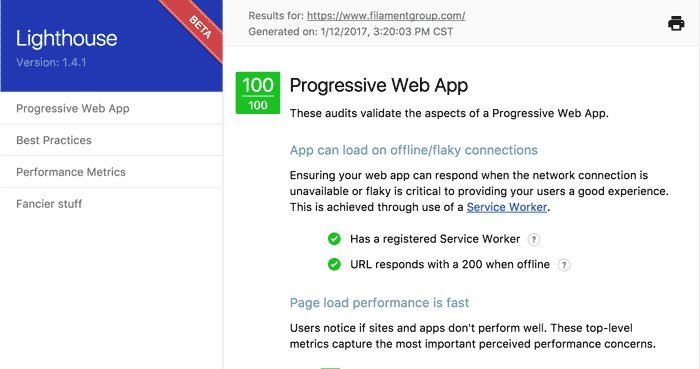

Permalink to 'Progressive Web Appy Goodness'In addition to H2 and service worker, our site references a web app manifest file, which provides a device some meta information (site name, theme colors, app icons, etc.) about our site in the event that a user decides to “install” it as an app. With all these pieces in place, our site can be deemed a bonafied Progressive Web App. Just looks at this perfect score on Google’s Lighthouse validator tool:
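For reference, here’s a typed TypeScript sketch of such a manifest. The member names come from the Web App Manifest specification, but every value is a placeholder, and the install check reflects commonly cited criteria rather than any one browser’s exact rules, which vary and have changed over time.

```typescript
// A typed sketch of a web app manifest. Member names follow the Web App
// Manifest spec; all values here are placeholders.
interface ManifestIcon {
  src: string;
  sizes: string;
  type: string;
}

interface WebAppManifest {
  name?: string;
  short_name?: string;
  start_url?: string;
  display?: "fullscreen" | "standalone" | "minimal-ui" | "browser";
  theme_color?: string;
  background_color?: string;
  icons?: ManifestIcon[];
}

const manifest: WebAppManifest = {
  name: "Filament Group",
  short_name: "Filament",
  start_url: "/",
  display: "standalone",
  theme_color: "#ffffff",
  background_color: "#ffffff",
  icons: [{ src: "/images/icon-192.png", sizes: "192x192", type: "image/png" }],
};

// List members commonly expected before a browser offers to install a site.
// (Exact criteria vary by browser and have changed over time.)
function missingForInstall(m: WebAppManifest): string[] {
  const missing: string[] = [];
  if (!m.name && !m.short_name) missing.push("name or short_name");
  if (!m.start_url) missing.push("start_url");
  if (!m.icons || m.icons.length === 0) missing.push("icons");
  if (!m.display || m.display === "browser") missing.push("display (standalone, fullscreen, or minimal-ui)");
  return missing;
}

// Serialized, this is what a manifest file would contain.
console.log(JSON.stringify(manifest, null, 2));
```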

As a progressive web app, this site will prompt users in supporting browsers to install it as an app. So far, “installing” our site just means that it gets a little app icon on the device’s home screen, which isn’t much different from bookmarking any site to your home screen. But some devices support other features we can use as well, such as sending notifications to the user (perhaps when we publish a blog post?). We expect that additional app-like features will become available to progressive web apps over time.

Thanks!

Thanks for hanging in here for the long haul. We’re excited about the benefits that these new technologies provide, and can’t wait to apply what we’ve learned on our own site to better serve our clients. For helping with questions, code examples I’ve cribbed, and reviews of this post, I’d like to give a big thanks to Jeremy Keith, Ethan Marcotte, Jake Archibald, Lyza Gardner, Pat Meenan, Andy Davies, and Yoav Weiss.

As always, if you want to chat about this post, you can find us at @filamentgroup on Twitter. (And of course, if you’d like us to help you work through a challenge like this for your company’s site or app, get in touch!)